Introduction

A greedy algorithm always makes the choice that looks best at the moment. It makes a locally optimal choice in the hope that this choice will lead to a globally optimal solution.

Greedy algorithms do not result in optimal solutions always but for many problems they do. A problem can be solved by Greedy Algorithm if it exhibits optimal substructure. A problem exhibits optimal substructure if an optimal solution to the problem contains within it optimal solutions to subproblems. Optimal substructure is a necessary property of both Greedy and Dynamic Programming classes of problems.

But then when should we apply Dynamic Programming and when Greedy?

Turns out Dynamic Programming has another important condition: Overlapping Subproblems. It simply means that the space of subproblems must be “small” in the sense that a recursive algorithm for the problem solves the same subproblems over and over, rather than always generating new subproblems. When a problem doesn’t have overlapping subproblems, we might want to solve it by greedy technique.

Huffman

Huffman code is used to compress the file. For example: Consider the word “Hello”.

The bit representation of “Hello is “: 01101000 01100101 01101100 01101100 01101111

It means, we will need 40 bits to store/transfer “hello”.

Huffman was a student at MIT when he discovered that its cheap to transfer/store when we already know the frequency of the characters in the given text and then use less bit to represent more common characters. In our case, “l” occurs twice while “e”, “h”, “o” occurs once in the given text. Frequency table would like below:

| Character | Frequency |

| H | 0.2 |

| e | 0.2 |

| l | 0.4 |

| o | 0.2 |

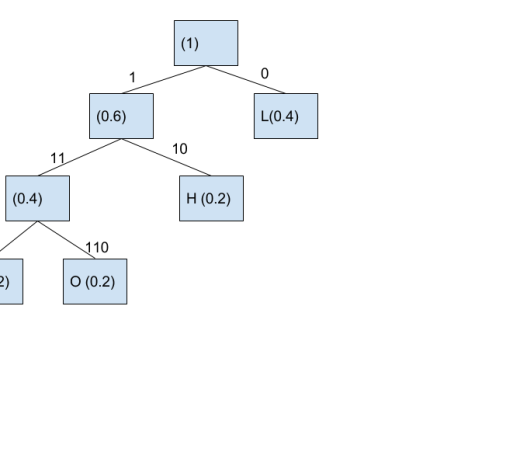

Now, we need to build Huffman tree using the frequency distribution provided.

How do we do that?

a. Make a node of each character and respective frequency. In the code shown below, TreeNode class is the node.

b. Store each node in the priority queue. Priority queue, because it will always give us nodes sorted by frequency. Precisely why TreeNode implements Comparable as it will give us a compareTo function which will be used by priority queue to give us nodes sorted on the frequency.

c. Now, take two smallest nodes, and make it left and right tree of a new TreeNode and again push it in priority queue. Do this, until just one element remains in Priority Queue.

d. Now, the only TreeNode remaining in Priority Queue is our Huffman Tree. Assign bits to each branch.

Huffman Tree looks like this now:

Here’s the java code to do the same.

| package com.tutorial.protobuf; | |

| import java.util.HashMap; | |

| import java.util.Map; | |

| import java.util.PriorityQueue; | |

| public class Huffman { | |

| public static void main (String [] arguments){ | |

| String input = "hello"; | |

| //Step 1: Find the count of the characters in the input string | |

| int[] countOfCharacters = getCountOfCharacters(input); | |

| //Step 2: Build Huffman Tree using the count of characters | |

| TreeNode huffmanTree = getHuffmanTree(countOfCharacters, input); | |

| //Step 3: Build Huffman Code using the Huffman Tree | |

| Map<String, String> huffmanCode = new HashMap<>(); | |

| getHuffmanCode(huffmanCode, huffmanTree, "", true); | |

| //Step 4: Encode the String using Huffman code | |

| String encodedString = getEncodedString(huffmanCode, input); | |

| System.out.println("Encoded String is "+encodedString); | |

| } | |

| private static String getEncodedString(Map<String, String> huffmanCode, String input) { | |

| char [] inputCharArray = input.toCharArray(); | |

| StringBuffer encoadedString = new StringBuffer(); | |

| for(int i = 0; i < inputCharArray.length; i++){ | |

| encoadedString.append(huffmanCode.get(String.valueOf(inputCharArray[i]))); | |

| } | |

| return encoadedString.toString(); | |

| } | |

| private static void getHuffmanCode(Map<String, String> huffmanCode, TreeNode huffmanTree, String bit, Boolean isLeft) { | |

| if(huffmanTree == null) | |

| return; | |

| if(huffmanTree.isLeaf()){ | |

| if(isLeft) { | |

| if(bit.isEmpty()) | |

| bit = "0"; | |

| else | |

| bit.concat("0"); | |

| } | |

| else | |

| bit.concat("1"); | |

| huffmanCode.put(String.valueOf(huffmanTree.character), bit); | |

| } | |

| getHuffmanCode(huffmanCode,huffmanTree.leftLeaf, bit.concat("0"), true); | |

| getHuffmanCode(huffmanCode,huffmanTree.rightLeaf, bit.concat("1"), false); | |

| } | |

| private static TreeNode getHuffmanTree(int[] countOfCharacters, String input) { | |

| //This priority queue will store tree nodes with lowest frequency character first | |

| PriorityQueue<TreeNode> priorityQueue = new PriorityQueue<>(); | |

| int length = countOfCharacters.length; | |

| int inputLength = input.length(); | |

| int uniqueChaCount = 0; | |

| for(int i = 0; i < length; i++){ | |

| int ascii = i; | |

| int count = countOfCharacters[ascii]; | |

| double frequency = (double) count/inputLength; | |

| if(frequency > 0D) { | |

| TreeNode treeNode = new TreeNode((char) i, frequency); | |

| priorityQueue.add(treeNode); | |

| uniqueChaCount = uniqueChaCount + 1; | |

| } | |

| } | |

| TreeNode huffmanTree = null; | |

| while(priorityQueue.size() > 1){ | |

| TreeNode leftLeaf = priorityQueue.poll(); | |

| TreeNode rightLeaf = priorityQueue.poll(); | |

| TreeNode root = new TreeNode(leftLeaf.frequency + rightLeaf.frequency, leftLeaf, rightLeaf); | |

| priorityQueue.add(root); | |

| } | |

| return priorityQueue.peek(); | |

| } | |

| private static int[] getCountOfCharacters(String input) { | |

| //256 is total number of ascii characters | |

| int [] countOfCharacters = new int [256]; | |

| char [] inputCharArray = input.toCharArray(); | |

| int length = inputCharArray.length; | |

| for(int i = 0; i < length ; i++){ | |

| //Type casting char in integer gives the ascii of the character | |

| int ascii = (int)inputCharArray[i]; | |

| //Increase the count of the character encountered | |

| countOfCharacters[ascii] = countOfCharacters[ascii] + 1; | |

| } | |

| return countOfCharacters; | |

| } | |

| /** | |

| * This class will help to build huffman tree based | |

| * on priority queue | |

| */ | |

| private static class TreeNode implements Comparable<TreeNode>{ | |

| char character; | |

| double frequency; | |

| TreeNode leftLeaf; | |

| TreeNode rightLeaf; | |

| public TreeNode() { | |

| } | |

| public TreeNode(char character, double frequency) { | |

| this.character = character; | |

| this.frequency = frequency; | |

| } | |

| public TreeNode(double frequency, TreeNode leftLeaf, TreeNode rightLeaf) { | |

| this.frequency = frequency; | |

| this.leftLeaf = leftLeaf; | |

| this.rightLeaf = rightLeaf; | |

| } | |

| public boolean isLeaf(){ | |

| if(leftLeaf == null && rightLeaf == null) | |

| return true; | |

| return false; | |

| } | |

| @Override | |

| public int compareTo(TreeNode o) { | |

| if(this.frequency > o.frequency) | |

| return 1; | |

| return -1; | |

| } | |

| } | |

| } |

Result

Output of the code is:

Encoded String is 1011100110

You can see that ‘hello’ is represented as 01101000 01100101 01101100 01101100 01101111 which takes 40 bits. Now the same string is represented in 10 bits.

Another implementation of huffman can be found here.

If you liked this article and would like one such blog to land in your inbox every week, consider subscribing to our newsletter: https://skillcaptain.substack.com

This is a fascinating explanation on how to build and utilize Huffman trees for data compression.

LikeLike